Leading AI Labs Preview Next‑Gen Models and Multilingual Capabilities at Global Summit

In early 2026, artificial intelligence (AI) researchers and labs around the world showcased next‑generation models and advanced multilingual capabilities at major international gatherings of industry leaders, scientists, and policymakers. These events, most notably the 2026 AI Impact Summit in New Delhi, have become platforms for announcing new AI advances and for demonstrating how the technology is evolving not just in performance but also in global accessibility, language coverage, and cross‑cultural relevance.

A Showcase for Global AI Innovation

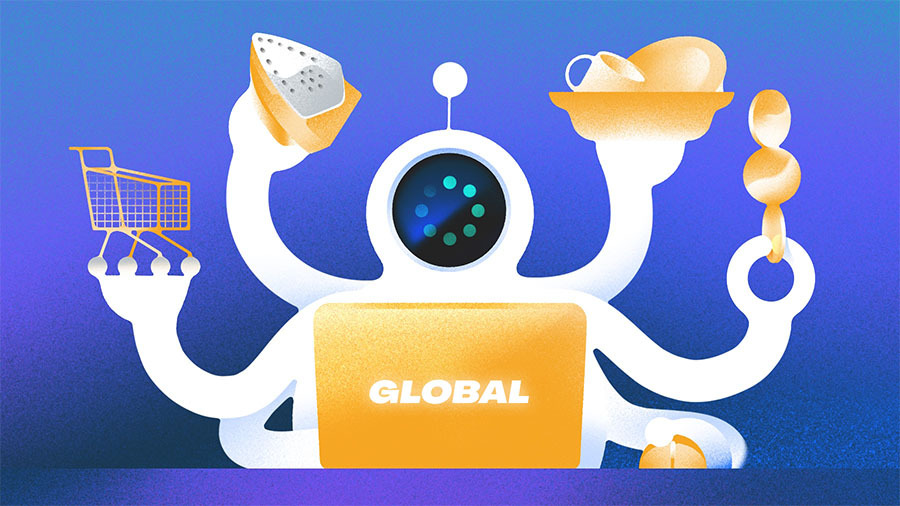

The India AI Impact Summit 2026, held in New Delhi from February 16–21, became a focal point of international AI discourse, drawing participants from technology companies, research institutions, startups, and governments in more than 30 countries. The event highlighted how AI research labs and industry teams are prioritizing multilingual and multimodal AI systems: models capable of understanding and generating content across many languages and tasks.

At the summit, several Indian AI labs and organizations unveiled cutting‑edge models addressing key challenges such as linguistic diversity, multimodal processing, and efficient reasoning:

- Sarvam AI, an Indian AI research lab, launched multiple new large language models using a mixture‑of‑experts architecture, including models with 30 billion and 105 billion parameters. These models are designed for tasks across text, speech, and vision, signaling a push toward multimodal intelligence.

- The government‑backed BharatGen Param2 model, a 17‑billion‑parameter multilingual AI model supporting 22 Indian languages with multimodal capabilities, was also introduced. This reflects a broader commitment to inclusive AI that can serve a wide range of linguistic communities.

These announcements illustrate how research organizations are not only scaling up model sizes but also tailoring them for language diversity and cultural relevance — a crucial direction as AI systems aim for global utility.

Multilingual Models as a Strategic Priority

The development of multilingual AI has become a priority across regions, especially in places with rich linguistic diversity. For example, Sarvam AI’s mobile and conversational AI product “Indus” integrates multilingual interfaces with text and voice interaction, supporting numerous Indian languages and enabling code‑mixing between languages such as Hindi and English. This reflects growing emphasis on making AI accessible beyond major global languages like English or Mandarin.

Multilingual models aren’t limited to the India summit. In Europe, initiatives like Apertus, developed under the Swiss National AI Initiative, highlight how research labs are building models trained on hundreds of languages to support global use cases in business and academia. These models emphasize safety, accessibility, and compliance with emerging regulatory norms such as the EU’s Artificial Intelligence Act.

Together, these developments point to a broad trend where AI research increasingly recognizes that language inclusivity is a key component of worldwide adoption — and major labs are investing accordingly.

Beyond Language: Multimodal and Cultural AI Systems

Next‑generation AI models are also moving beyond text to integrate speech, vision, and multimodal reasoning. Labs are experimenting with architectures that can interpret visual and spoken inputs in context:

- Voice‑to‑voice foundational models — exemplified by startups like gnani.ai, which debuted a voice conversion and synthesis model at the summit — demonstrate how AI can engage users in natural spoken interaction across languages and dialects.

These systems represent a shift from text‑only AI toward tools that can understand and generate across multiple human modalities, making interactions more intuitive and useful in real‑world settings.

Global Collaboration and the Broader AI Community

Global summits like the one in New Delhi are important not just for announcements, but for building international research ecosystems. Labs from different regions share findings, datasets, and models to advance common goals — such as inclusive AI, language coverage, and ethical deployment. Participation by international delegations and researchers helps ensure that AI development reflects diverse values and perspectives, rather than being concentrated in a few leading tech hubs.

For example, the summit’s broad participation brought attention not only to new models and products but also to frameworks for AI governance, safety, and capacity building, particularly for underrepresented regions in the Global South.

Why These Trends Matter

The transition toward next‑gen multilingual and multimodal AI systems reflects several core trends in AI research today:

- Expanded language coverage helps break down barriers for communities speaking non‑dominant languages, making AI tools more globally applicable and culturally sensitive.

- Multimodal capabilities allow AI to process information in ways that more closely resemble human understanding — combining text, speech, and visual inputs.

- Large‑scale collaborations at summits and research coalitions promote shared progress, ethical standards, and innovation ecosystems across borders.

In 2026, AI labs worldwide are signaling that the future of AI isn’t just about bigger models — it’s about broader inclusion, richer interaction modalities, and shared advancement of capabilities that serve diverse populations across the globe.